Killian Steunou

I am an industrial PhD student at Institut Polytechnique de Paris and Moments Lab, working on efficient omni-modal learning for generalized video understanding.

Efficient omni-modal learning for generalized video understanding.

Efficiency, multimodal learning, tracking, and deployment-aware model design.

Scientific rigor, clear writing, reproducible pipelines, and pragmatic engineering.

News

New preprint: PEEK — frame selection for efficient video captioning

We open-sourced the code and model weights on Hugging Face and released a live demo you can try in the browser.

About

I am currently pursuing an industrial PhD in machine learning at Institut Polytechnique de Paris and Moments Lab. The work sits at the intersection of modern multimodal models and the practical limits that define whether they remain useful in the real world.

I am especially drawn to problems in computer vision and deep learning because visual perception feels foundational to intelligence, both human and artificial. I like models that can scale, adapt, and still remain legible enough to improve.

Efficient omni-modal learning for generalized video understanding, with an emphasis on scalable training and practical inference.

Open-source practices, transparent experimentation, and reproducible pipelines that other researchers can meaningfully build on.

Experience

Education

ENS Paris-Saclay

Toulouse School of Economics

University of Copenhagen

Toulouse School of Economics

Publications

We propose PEEK, a training-efficient frame selection method that distills knowledge from vision-language teachers to identify the most informative frames for video captioning. It achieves competitive captioning performance while processing significantly fewer frames.

We show, theoretically and empirically, that SPCA-based classifiers are more robust than PCA-based alternatives under adversarial attack, providing a new perspective on linear dimensionality reduction as a defense mechanism.

Projects

I integrated ControlNet-inspired edge controls and SAM-based masking into joliGEN, then helped improve its documentation.

An implementation of OWL-ViT for zero-shot object detection in videos using natural-language prompts.

We reproduced 3DETR on SUN RGB-D and explored how a lean transformer detector behaves when extended with RGB information.

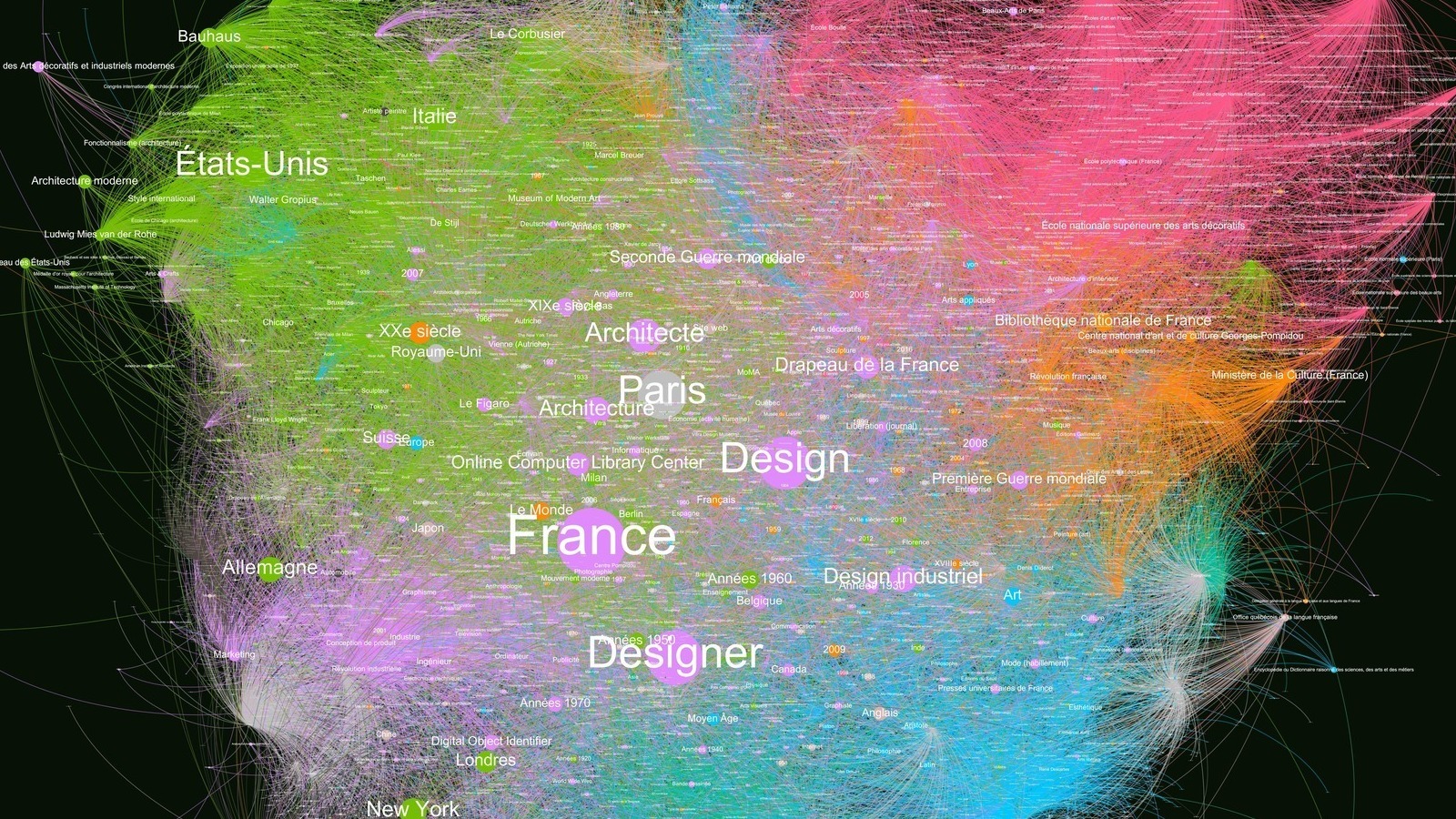

A French-language concept graph built by scraping linked Wikipedia topics and exporting the result for graph exploration.

A French word-ladder generator that computes the shortest sequence of one-letter edits between two words.
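For readers curious how such a ladder can be computed, here is a minimal sketch using breadth-first search. It is not the project's actual code: the function name `word_ladder`, the tiny vocabulary, and the restriction to same-length words with one-letter substitutions are all assumptions made for illustration. A real French word list would also need accented letters in `alphabet`.

```python
from collections import deque
from string import ascii_lowercase


def word_ladder(start, goal, vocabulary, alphabet=ascii_lowercase):
    """Return the shortest ladder from start to goal as a list, or None.

    Assumption: an "edit" is a single-letter substitution, so both words
    must have the same length. Every step after start must be in vocabulary.
    """
    words = set(vocabulary)
    if len(start) != len(goal):
        return None
    parent = {start: None}  # doubles as the visited set
    queue = deque([start])
    while queue:
        word = queue.popleft()
        if word == goal:
            # Walk the parent links back to start, then reverse.
            path = []
            while word is not None:
                path.append(word)
                word = parent[word]
            return path[::-1]
        # Try every one-letter substitution of the current word.
        for i in range(len(word)):
            for letter in alphabet:
                candidate = word[:i] + letter + word[i + 1:]
                if candidate in words and candidate not in parent:
                    parent[candidate] = word
                    queue.append(candidate)
    return None


# Hypothetical toy vocabulary, for illustration only.
vocab = {"pont", "port", "part", "pari", "mort"}
print(word_ladder("pont", "pari", vocab))  # ['pont', 'port', 'part', 'pari']
```

Breadth-first search visits words in order of their distance from the start, so the first time the goal is reached the ladder is guaranteed to be a shortest one.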

Writing

An analysis of how efficiency moved from the margins to the center of video understanding research from 2015 to 2025.

A worked visual walkthrough of n-grams, TF-IDF, BLEU, ROUGE-L, METEOR, and CIDEr for captioning research.

Demos

Select the most informative frames from a video, distilled from vision-language teachers.

Whisper-powered subtitling for audio and video with a fast demo workflow.

Interactive segmentation-based background removal for video.

Contact

I am always interested in thoughtful conversations around machine learning research, multimodal systems, visual understanding, and the engineering decisions that make models usable outside the lab.